I was halfway through a documentary about ancient Rome last night when I stopped and asked myself: why am I watching this? I’m not into Roman history. I hadn’t searched for it. I didn’t even know it existed until Netflix autoplay decided this was exactly what I needed. And here’s the embarrassing part – I was genuinely enjoying it. The algorithm knew what I wanted better than I did.

This happens constantly. I buy books Amazon recommends without reading the synopsis. I click articles Google surfaces without checking the source. I try restaurants Yelp suggests without asking friends. Somewhere along the line, I stopped making my own choices and started letting the math make them for me. My feeds curate what I see, recommendation engines decide what I discover, and personalization algorithms shape what seems available. The platforms I use daily essentially tell me to read more about certain topics, watch specific shows, buy particular products, and I just… do it. I’m trying to figure out when I handed over this much control.

When did we start trusting the algorithm?

My friend Rachel works in data science. Over coffee last Saturday, I asked why I keep accepting algorithmic recommendations without pushback. Her explanation was both fascinating and disturbing.

Humans, she explained, are terrible at making decisions when faced with too many options. It’s called choice paralysis. When you’re scrolling through thousands of movies, hundreds of products, and endless articles, your brain gives up. The cognitive load becomes overwhelming. So when an algorithm says “based on everything we know about you, THIS is probably what you want,” it’s offering relief from decision fatigue.

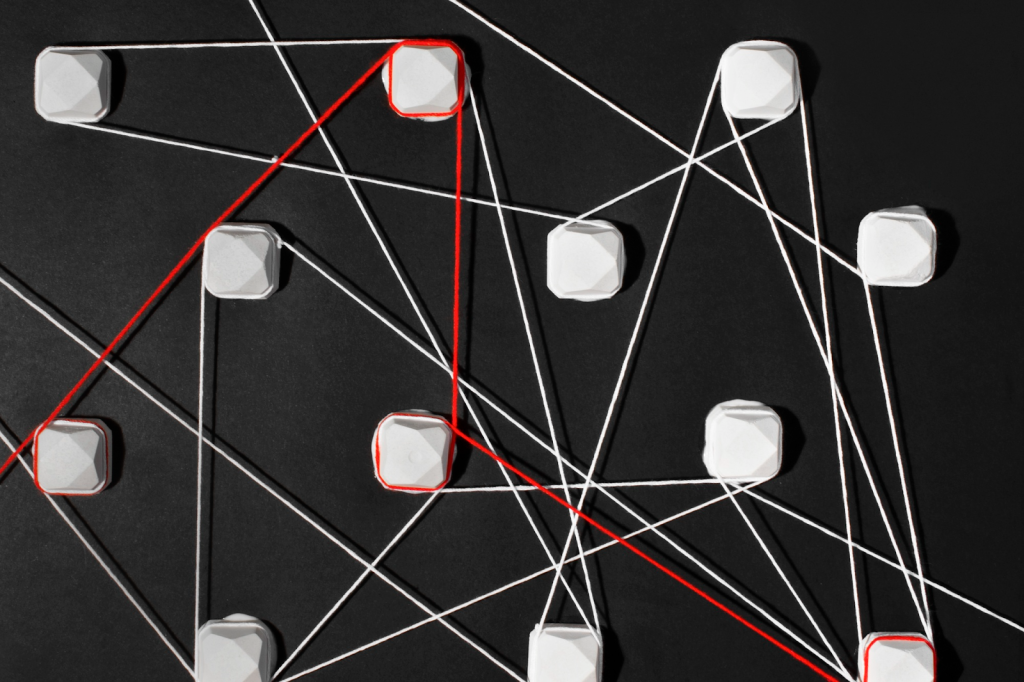

But algorithms aren’t showing us what’s best – they’re showing us what’s most likely to keep us engaged. Those are fundamentally different goals. The recommendation might not be the highest-quality content or the best-value product. It’s just the thing most likely to make you click, watch, buy. The feedback loop is what really stuck with me. The more we follow the system’s suggestions, the more data it collects, and the more data it has, the better it can guess what we’ll accept.

How platforms affect the decisions we make

I began to understand how different services subtly guide the choices we make:

| Type of platform | How it helps you decide | What it prioritizes |
| --- | --- | --- |
| Streaming services | “Because you watched” sections, personalized thumbnails, and autoplay that starts the next episode automatically | Maximizing watch time and retaining subscribers |
| Online stores | Limited-time deals, “frequently bought together” bundles, and sponsored product placements | Encouraging purchases and increasing cart value |
| Social media | Algorithmic feeds that push trending topics, high-engagement posts, and suggested accounts to the top | Extending session length and boosting interaction rates |
| News platforms | Endless scrolling, personalized story suggestions, and bundles of related articles | Increasing clicks, impressions, and time on page |
| Search engines | Autocomplete suggestions, featured snippets, and personalized ranking results | Driving ad clicks and measuring user satisfaction signals |

Laid out like this, the pattern is clear: every platform steers me toward choices that benefit its own metrics. So I tried making a few choices without them. I discovered a book my algorithm would never surface. I found a restaurant my app didn’t recommend. I watched a movie not “optimized for me” and loved it because it challenged my preferences.

The weird thing is how quickly I wanted to go back. Making choices required effort. The algorithm offers the path of least resistance.

When guidance becomes control

There’s a point where suggestion becomes manipulation. When YouTube queues up increasingly extreme versions of whatever you’re watching, is that service or radicalization?

My brother got sucked in last year. He started watching one conspiracy video ironically. The algorithm served increasingly extreme content. Six months later, he’s dropping false claims into dinner conversation, citing “research”: videos the algorithm fed him based on what kept him watching, not what was true. That scared me. The algorithm doesn’t care about truth or wellbeing. It cares about engagement. And we’ve been conditioned to trust its recommendations as neutral and helpful.

Finding balance

Some recommendations are genuinely useful. Spotify’s Discover Weekly has introduced me to artists I love. Google Maps’ suggestions have led me to great meals. The key is maintaining awareness. When I accept a recommendation, I ask: am I choosing this because I evaluated it? Or because it was placed in front of me?

I’ve started doing exercises. Once a week, I deliberately search outside my algorithmic bubble. I ask actual humans for recommendations. Small resistance against algorithmic capture.

Rachel suggested thinking of algorithms as tools, not oracles. They’re useful for narrowing options. But they shouldn’t be the only input. Your own curiosity, judgment – those still matter.

That ancient Rome documentary appears again in my suggested content. The algorithm remembers I watched it once. Maybe I do want more. Or maybe it’s just good at making me think I do. I click play anyway. Old habits.